Google Knows How To Teach!

“Enough is enough! I’m so done with it!” That’s what the voice inside my head yelled at me after hours and days of grinding through Machine Learning material. Though I am used to that, this one sounded serious. Really serious! “Umm, ok. What do you want to do then?” I asked rather politely. Hardly had I finished saying those words when I got the response: “Grab some data. Run some code. Do some analysis. Show me some plots, graphs.” As must be evident from those words, my inner self is not fed up with Machine Learning. It is fed up with too much studying and learning and too little trying and implementing. So I browsed through my saved list of kernels, notebooks, GitHub repos and blog posts to find something hands-on, and I chose this: Spooky Author Identification!

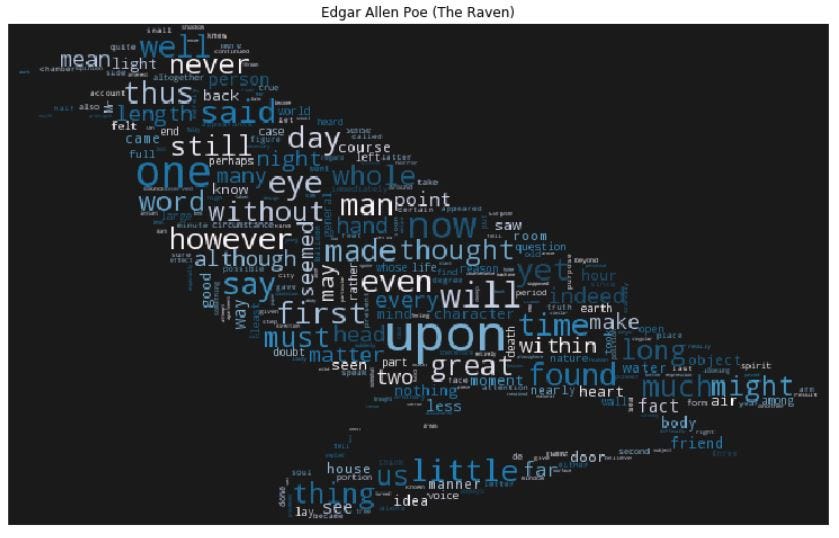

For the next two hours or so, we were busy working on that Kaggle problem. Actually, I was following along with a very useful notebook I found for it on Kaggle. I love word-clouds, and after going through this notebook, I learned how to make one!

The Raven word-cloud, with font size varying according to the frequency of the words
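The idea behind that sizing is simple enough to sketch in a few lines. This is only a rough illustration of frequency-based scaling, not the code from the notebook I followed; the `font_sizes` helper, its size limits and the sample text are all made up for the example.

```python
import re
from collections import Counter


def font_sizes(text, min_size=10, max_size=60, top_n=5):
    """Map the most frequent words to font sizes by linear scaling.

    Hypothetical helper: the name and default sizes are assumptions.
    """
    # Lowercase the text and pull out runs of letters and apostrophes.
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(words).most_common(top_n)
    if not counts:
        return {}
    highest = counts[0][1]
    lowest = counts[-1][1]
    # Avoid dividing by zero when every word is equally frequent.
    span = max(highest - lowest, 1)
    return {
        word: min_size + (count - lowest) * (max_size - min_size) / span
        for word, count in counts
    }


# Made-up sample text, not the actual poem.
sample = "nevermore nevermore nevermore raven raven door"
sizes = font_sizes(sample)
print(sizes)
```

The most frequent word gets the largest size and the least frequent of the selected words gets the smallest, with everything else placed in between.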

As I was feeling joyful looking at those artful word-clouds, my phone buzzed with a Twitter notification. And this is what I found in a retweet:

We're sharing resources for everyone to learn more about AI, including a "Machine Learning Crash Course" which over 18K Googlers have enrolled in! #GoogleAI https://t.co/vCiw5QmNLe

— Sundar Pichai (@sundarpichai) March 1, 2018

In the next second, I was on the blog post, and from there I reached the crash course (here). Three things followed. One, I noticed the videos were not playing. I reloaded, tried different modules and then switched from Chrome to another browser, and it worked. The problem was the ad-blocker extension on Chrome: once I disabled it, the videos played fine. I used the Feedback button to let them know about the issue. Two, the list on the left covered many important ML topics, and my interest started to grow. Three, my angry inner voice returned: “Reducing loss, generalization, logistic regression, neural networks…another word of theory and I am going to fire and fry the neurons in your brain and I am serious”. This time, however, I responded differently: “I’m nooot listening to you nooow!” When two of the things you love come together (ML and Google), you can’t stop yourself from grabbing them with both hands. Over the next few hours, I was busy watching videos, reading notes and running exercises, and I finished half of the crash course. And guess what, it wasn’t just me who got glued to it. My complaining, yelling, angry inner voice joined in too.

Google’s Machine Learning crash course will now be on my list of recommended resources for Machine Learning. Though it calls itself a crash course, anyone who finishes all the course content, including the videos, notes, exercises and playground tasks, will realise it is a lot more than that. It not only helped me understand many things better but also introduced me to a few methods and concepts that I wasn’t aware of.

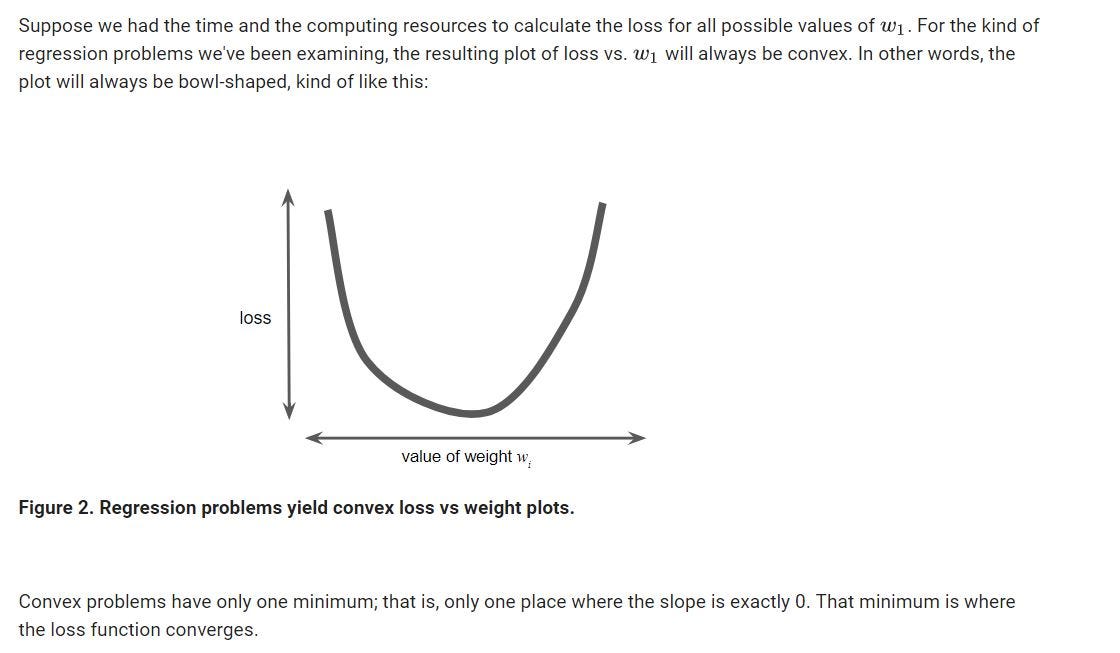

“Dude, this is what convex means! You heard about it in all those courses and wondered what it was.” That was my inner voice after it joined me and got glued to this course.

This isn’t the first resource from Google that I have found really helpful for Machine Learning. Jason Mayes’s Machine Learning 101 deck has very illustrative slides that explain the concepts of ML pretty well. It has a hundred and one slides of very good Machine Learning content with an excellent design and very good examples.

Getting started with #ML? Want a deeper understanding, or maybe just plain confused? Check out my #MachineLearning 101 deck! This deck is a collection of knowledge I gathered over 2 years of reading many many articles so you don't have to. https://t.co/r1ayQTkWoE pic.twitter.com/GB9GpAwg8M

— Jason Mayes (@jason_mayes) December 6, 2017

Examples make understanding easier. And that’s exactly what Martin Gorner did with his few hours of sessions on Deep Learning (here). Though this isn’t as big as any MOOC on neural networks, Martin explains very well how a neural network works by breaking it down and showing how each part of it functions. In a couple of hours, you will understand how the data is fed into a network, how the weights and biases are applied, why we need activation functions, and how to improve accuracy with regularization, dropout and many other useful techniques. This also helped me understand how TensorFlow works.
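To make that concrete for myself, here is a tiny sketch of one fully connected layer in plain Python. It is my own toy illustration, not code from Martin's sessions and not how TensorFlow is actually used; the `dense` and `relu` names and all the numbers are made up for the example.

```python
def relu(x):
    """ReLU activation: negative values become zero."""
    return max(0.0, x)


def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(w . x + b) for each neuron.

    Hypothetical helper for illustration only.
    """
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


# Made-up numbers: two input features feeding two neurons.
inputs = [1.0, 2.0]
weights = [[0.5, -1.0], [1.0, 1.0]]  # one row of weights per neuron
biases = [0.0, -1.0]

hidden = dense(inputs, weights, biases, relu)
print(hidden)
```

Each neuron multiplies the inputs by its weights, adds its bias and passes the result through the activation function. Without the activation, stacking layers like this would still only give you a linear function of the inputs.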

Besides all these resources, there are many others that I keep finding from time to time when I go through Google’s websites. Google’s Tech Dev Guide and Learn With Google AI have very good resources, both for learning and for preparing for interviews. In the blog post Rules of Machine Learning, the author lists 43 rules, each explained with an example of how it’s implemented or used in Google products like Google Play and Google Plus. Google Codelabs is another very useful resource, with easy-to-follow tutorials for a range of Google products and technologies. Google’s blog pages (like this and this) are also good places to find some very interesting articles and updates.

With hundreds of courses available through MOOCs, YouTube, blogs and many other channels, coming from universities, scholars, researchers, students and bloggers, it’s also great to have some learning resources that come from the tech companies. And Google seems to be doing that really well. I hope Google and other tech companies come up with more of these open resources for anyone who’s willing to learn.

Though most of the resources listed here are on Machine Learning, which is what I have been living on for close to a year, I have also come across many resources for other technologies and products. Exploring Google Developers and Google’s other sites will lead you to invaluable resources to learn and practice.

Did you find this post useful? Head over here and give it a clap (or two 😉). Thanks for reading!!
